Bad buzz: real examples and key lessons

We often think a bad buzz starts with a brand mistake: an awkward post, a faulty product, a controversial statement. Sometimes that’s true. But in most cases we observe at Bodyguard.ai, the real fuel behind a bad buzz isn’t the initial incident — it’s what happens next in the comments.

An inclusive campaign flooded with thousands of hateful comments. A partner content creator overwhelmed by online harassment. A community space turning into a toxic battleground. A comment section so aggressive that loyal customers leave and prospects never come back. In each of these cases, the brand didn’t fail — toxicity hijacked the conversation.

In 2026, the real risk to your online reputation is no longer just what you say. It’s what others say in your spaces, under your posts. Your comments are your storefront. And if that storefront is filled with hate, insults, and harassment, your brand image suffers — even if you’re not at fault.

In this article, we analyze real-life cases where comment toxicity created or amplified reputation crises, and we draw key lessons to help you protect your conversation spaces. Not by silencing criticism — it’s legitimate and valuable — but by preventing hate from drowning out the dialogue. For a complete strategic perspective, explore our guide on online reputation: definition, risks, and protection strategies.

Table of contents

- Why are toxic comments the real fuel behind bad buzz?

- When toxic comments destroy an otherwise successful campaign

- How toxicity drives a community away and damages a platform

- When raids and coordinated attacks target a brand

- When lack of moderation turns criticism into a crisis

- What key lessons can you apply to protect your conversation spaces?

Why are toxic comments the real fuel behind bad buzz?

The algorithmic amplification mechanism

Social media algorithms don’t distinguish between positive and toxic engagement. A post generating 500 hateful comments is treated as “high-performing” content and pushed into the feeds of millions of additional users.

This creates a destructive feedback loop:

- A post receives toxic comments

- High engagement triggers algorithmic amplification

- The content reaches a wider audience

- More toxic comments appear

- The algorithm amplifies it even further

- The bad buzz takes off — often without the brand making any mistake

This loop can escalate within hours on platforms like TikTok or Instagram.
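The loop above can be sketched as a toy simulation. This is a minimal illustration, not a description of any real platform's ranking system: the function name `simulate_amplification` and the parameters `toxic_per_thousand` and `reach_per_comment` are hypothetical, and the values are chosen only to show how engagement-driven reach compounds.

```python
def simulate_amplification(initial_reach, toxic_per_thousand, reach_per_comment, rounds):
    """Toy model of the feedback loop: toxic comments count as engagement,
    and engagement buys extra reach in the next round.

    All parameters are illustrative assumptions, not measured values.
    """
    reach = initial_reach
    history = []
    for _ in range(rounds):
        # A fixed share of the people reached leaves a toxic comment.
        toxic_comments = reach * toxic_per_thousand // 1000
        history.append((reach, toxic_comments))
        # Each comment is treated as a signal to widen distribution.
        reach += toxic_comments * reach_per_comment
    return history


if __name__ == "__main__":
    rows = simulate_amplification(10_000, 10, 50, rounds=5)
    for round_number, (reach, toxic) in enumerate(rows, start=1):
        print(f"round {round_number}: reach={reach}, toxic comments={toxic}")
```

With these assumed values, reach grows by half in every round (10,000, then 15,000, then 22,500) even though the brand publishes nothing new. The growth comes entirely from the comments.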

The spiral effect: when toxicity drives out positive voices

A well-documented phenomenon: when the first comments under a post are toxic, well-intentioned users self-censor. No one wants to engage positively in a hostile environment. As a result, only negative comments remain visible, creating a distorted perception of reality.

Studies show that:

- 78% of users avoid commenting in hostile spaces

- Positive comments drop by 60% when the first 5 comments are negative

- 1 visible toxic comment discourages an average of 4 constructive comments

This spiral completely distorts how your brand is perceived. A campaign appreciated by 95% of your audience can appear disastrous if the 5% of toxic voices dominate the conversation.

Your comments are your storefront

In 2026, consumers don’t just look at your content — they read the comments. They scroll. They form an opinion not based on what you say, but on what others say about you.

A prospect landing on your Instagram page and seeing:

- Enthusiastic comments and relevant questions → trust increases

- Insults, harassment, and spam → immediate drop-off

Your comment section has become your live social proof. If that space is toxic, no advertising campaign can compensate for that first impression.

When toxic comments destroy an otherwise successful campaign

Inclusive campaigns drowned in hate

The recurring pattern

A brand launches a campaign highlighting diversity: a plus-size model, an LGBTQ+ couple, a person with a disability. The campaign is praised by the silent majority. But the comment section fills with hateful, fatphobic, homophobic, or ableist attacks. Media outlets pick up the “controversy.” A bad buzz is born — not because the campaign failed, but because hate hijacked the conversation.

Documented examples:

- Dove campaigns promoting real bodies → waves of body shaming in comments

- Nike ads featuring Colin Kaepernick → racist comments and hateful boycott calls drowning the message

- Campaigns featuring same-sex couples → massive homophobic backlash becoming the story instead of the product

What actually happens:

- The brand launches a campaign aligned with strong values ✓

- The majority of the audience silently supports it ✓

- A toxic minority floods the comments ✗

- Media focus on the “controversy” instead of the message ✗

- The brand is perceived as “controversial” despite broad support ✗

- Future inclusive campaigns are slowed down by fear of backlash ✗

The takeaway:

These brands did nothing wrong. Their campaigns were bold and aligned with their values. What turned success into “controversy” was purely comment toxicity. If these spaces had been protected — with hateful insults filtered and harassment blocked — visible comments would have reflected reality: majority support with constructive debate. Legitimate criticism (“I don’t like this ad,” “This message doesn’t resonate with me”) would remain. Hate wouldn’t have had a platform.

This is exactly what contextual moderation enables: distinguishing criticism from harassment, preserving debate while filtering hate.

Product launches sabotaged by trolls

The pattern

A brand launches a new product. The community is excited. But trolls, disguised competitors, or hostile groups flood the comments with fake negative feedback, mockery, and misinformation. Real potential customers, seeing this hostile space, abandon their purchase.

Typical examples:

- New smartphone launch → flooded with “this is trash,” “scam,” “buy [competitor]” from suspicious accounts

- Video game release → coordinated review bombing with dozens of fake negative reviews within hours

- Beauty product launch → toxic comments targeting the founder’s appearance instead of the product

Measurable impact:

Studies show that negative comment sections reduce purchase intent by 40% among prospects who read them. Review bombing can drop a rating from 4.5 to 2.8 in 24 hours, requiring months to recover.
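To see why a small coordinated group can move a rating this far, the arithmetic can be worked through. The sketch below assumes the displayed rating is a simple arithmetic mean of star ratings (real platforms may weight reviews differently) and that every fake review gives one star. The function name and the review counts are hypothetical.

```python
import math


def fake_reviews_needed(existing_count, current_avg, target_avg, fake_rating=1.0):
    """Number of fake reviews at `fake_rating` needed to pull a simple
    average from `current_avg` down to `target_avg` or lower.

    Derived from: existing*current + k*fake = target * (existing + k)
    """
    if not fake_rating < target_avg < current_avg:
        raise ValueError("expected fake_rating < target_avg < current_avg")
    k = existing_count * (current_avg - target_avg) / (target_avg - fake_rating)
    return math.ceil(k)


if __name__ == "__main__":
    for count in (30, 100):
        needed = fake_reviews_needed(count, 4.5, 2.8)
        print(f"{count} existing reviews -> {needed} one-star reviews")
```

Under these assumptions, a product with 100 reviews averaging 4.5 falls to 2.8 after about 95 one-star reviews, and a product with 30 reviews needs only 29. The fewer genuine reviews a new product has, the cheaper the attack.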

The takeaway:

Product launches are moments of maximum vulnerability. Your community’s excitement can be crushed in hours by a coordinated minority. Monitoring and protecting your comment spaces during these key moments isn’t censorship — it’s protection against manipulation. Genuine feedback, both positive and negative, must remain visible. Coordinated attacks and fake reviews are not legitimate.

Partner creators targeted by online harassment

The growing phenomenon

A brand collaborates with a content creator. The sponsored post attracts not product feedback, but harassment targeting the creator: personal insults, attacks on appearance, sexist or racist abuse. The creator suffers, the audience is shocked, and the brand becomes associated with a toxic environment.

The chain reaction:

- The creator feels abandoned by the brand for not protecting the space

- The creator shares their experience → the brand is seen as passively complicit

- Other creators refuse future collaborations → influencer strategy weakened

- The creator’s audience boycotts the brand → customer loss

Ecosystem impact:

In 2026, 67% of creators report experiencing harassment in sponsored content comments. 45% have declined partnerships due to fear of harassment. Comment-section toxicity has become the #1 barrier to influencer marketing.

The takeaway:

Brands that actively protect conversation spaces around collaborations gain a major competitive advantage in the creator economy. This isn’t just an ethical issue — it’s a business one. Top creators choose brands that protect their communities and invest in safe environments. Real-time AI moderation enables this by filtering harassment while preserving authentic interactions between creators and their audience.

When toxicity drives a community away and destroys a platform

Brand forums turning into lawless zones

The pattern

A brand launches a community space (forum, Facebook group, Discord) to bring users together. Without proper moderation, the space gradually deteriorates: constructive discussions are replaced by conflict, insults, and spam. The founding members — those who brought the most value — leave one by one.

The downward spiral:

- Months 1–3: Enthusiastic community, rich and constructive discussions

- Months 4–6: First trolls appear, tensions rise, volunteer moderators become overwhelmed

- Months 7–12: Growing toxicity, departure of positive members, influx of problematic profiles

- Month 12+: The space becomes toxic, the brand shuts it down → bad buzz around “censorship”

The harsh irony:

The brand ends up in a double bind. If it lets things slide, the space becomes toxic and damages its image. If it shuts it down, it’s accused of censorship and disregarding its community. In both cases, a bad buzz emerges.

The takeaway:

Moderation must be built in from day one — not added reactively once things degrade. A community space without proactive moderation is a bad buzz waiting to happen. Moderation isn’t the enemy of community — it’s the condition for its survival. The best-moderated communities are the most active, engaged, and sustainable.

Comment sections that drive customers away

The measurable pattern

E-commerce brands notice unexplained drops in conversion rates on certain products. Investigation reveals that comment and review sections have been overrun by toxicity: fake reviews, hateful comments, spam, and user conflicts.

The numbers that matter:

- 93% of consumers read comments and reviews before buying

- A toxic comment section reduces purchase intent by 40%

- Prospects spend an average of 14 seconds forming an opinion from comments

- 5 visible toxic comments can cancel out the effect of 20 positive reviews

The hidden cost:

These conversion losses are rarely attributed to comment toxicity. Brands invest in ads, SEO, UX… but overlook that their comment sections are undermining those efforts. It’s a silent bad buzz — invisible in traditional metrics, but devastating for revenue.

The takeaway:

Your comments and reviews are a conversion channel, not a secondary space. Protecting them from toxicity, spam, and fake reviews delivers direct ROI. Every toxic comment filtered is potentially a saved customer. And this doesn’t mean removing legitimate negative reviews — on the contrary, authentic negative feedback, when handled properly, builds trust.

Brand spaces hijacked by hostile communities

The pattern

Organized communities on Discord, Telegram, or 4chan target brand spaces with coordinated raids: floods of toxic comments, spam, and shocking content. The brand becomes powerless in the face of a volume of toxicity no human team can manage in real time.

Typical scenarios:

- A partner streamer is targeted on Twitch → raiders spill over into the brand’s social channels

- A brand takes a stance on a social issue → organized groups flood its comments

- A competitor orchestrates destabilization through coordinated anonymous accounts

Immediate impact:

- Real customers can no longer interact safely

- Community management teams are overwhelmed and psychologically impacted

- The brand image becomes associated with visible chaos in its comments

- Media outlets amplify the worst screenshots

The takeaway:

When facing coordinated attacks, only real-time automation can protect your spaces. No human team can moderate 10,000 toxic comments arriving at once. AI moderation can detect these coordinated attack patterns and neutralize them instantly, preserving the space for genuine community members. This isn’t censorship — it’s protecting your space when a hostile crowd tries to take it over.
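One simple signal of a coordinated raid is an abnormal burst of comments in a short time. The sketch below is a minimal sliding-window rate check, offered as an illustration only: it is not a description of how Bodyguard or any other product detects attacks, and the class name `BurstDetector`, the window length, and the threshold are hypothetical. A real system would combine a signal like this with content and account analysis.

```python
from collections import deque


class BurstDetector:
    """Flags a burst when at least `threshold` comments arrive
    within the last `window_seconds` seconds."""

    def __init__(self, window_seconds, threshold):
        self.window_seconds = window_seconds
        self.threshold = threshold
        self._times = deque()

    def observe(self, timestamp):
        """Record one comment (timestamp in seconds, non-decreasing)
        and return True if the current window looks like a burst."""
        self._times.append(timestamp)
        # Drop comments that have fallen out of the sliding window.
        while self._times and self._times[0] <= timestamp - self.window_seconds:
            self._times.popleft()
        return len(self._times) >= self.threshold


if __name__ == "__main__":
    detector = BurstDetector(window_seconds=60, threshold=5)
    arrivals = [0, 100, 200, 300, 301, 302, 303, 304]
    for t in arrivals:
        print(t, detector.observe(t))
```

In the usage example, the first comments arrive far apart and are not flagged. Only the fifth comment inside the same 60-second window trips the detector.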

When lack of moderation turns criticism into a crisis

A legitimate comment that spirals without structure

The classic pattern

A customer posts a legitimate, factual negative comment under a post. Without moderation, the situation escalates:

Phase 1 — The legitimate comment:

“I received my order two weeks late and the product was damaged. Very disappointed.”

Phase 2 — Toxic piling (without moderation):

- “Same here!!! This brand is a total SCAM”

- “Bunch of frauds, hope you go bankrupt”

- “[insults] customer service hung up on me”

- “Spread the word, BOYCOTT this brand”

Phase 3 — Viral escalation:

- Screenshot of the thread shared on Twitter → “Look how [brand] treats its customers”

- Picked up by infotainment accounts → visibility x100

- Media coverage: “Brand X faces customer backlash”

The reality:

The initial issue (a delayed package) may have affected only 0.5% of orders. But the lack of structure in the comment section turned an isolated incident into a perceived widespread crisis. The 99.5% of satisfied customers remained silent while toxicity created an alternative reality.

The takeaway:

If the conversation space had been protected from the start:

- The customer’s legitimate comment would remain visible ✓

- The brand could respond calmly and professionally ✓

- Insults and hateful boycott calls would be filtered ✓

- Satisfied customers would feel safe to speak up ✓

- The thread would reflect reality: a properly handled issue ✓

Rumors thriving in unmoderated comment sections

The pattern

A false claim about a brand appears in the comments: “I heard that [brand] uses [dangerous ingredient / child labor / illegal practice].” Without moderation or fact-checking, the rumor spreads, gets repeated, and is treated as truth by other commenters.

How rumors spread in comments:

- A comment states an unverified claim

- Others repeat it (“Yeah, I heard that too”)

- Repetition creates perceived truth

- Screenshots circulate as “evidence”

- The brand discovers the rumor once it’s already established

The takeaway:

Misinformation in your own spaces becomes your responsibility. Not because you created it, but because your platform hosted it. Active monitoring and contextual moderation allow you to detect and address false information before it solidifies. The goal is not to silence opinions — but to prevent false claims from shaping perception.

Harassment targeting visible employees in brand spaces

The growing pattern

An employee appears in a video, post, or live session. The comments fill with personal attacks: mockery of appearance, insults, sexist or racist harassment. The employee suffers. The brand is seen as passively complicit.

The chain reaction:

- The targeted employee experiences real psychological harm

- Other employees refuse to appear in future content

- The “human faces” communication strategy is abandoned

- The brand loses authenticity by hiding its teams

- Internal trust in the company declines

The takeaway:

Protecting your comment spaces also means protecting your people. In 2026, employee well-being is a critical issue. A brand that exposes its teams to harassment without protection fails in its duty of care. Real-time moderation can automatically filter targeted harassment, allowing your teams to be visible without being vulnerable.

What practical lessons can you apply to protect your conversation spaces?

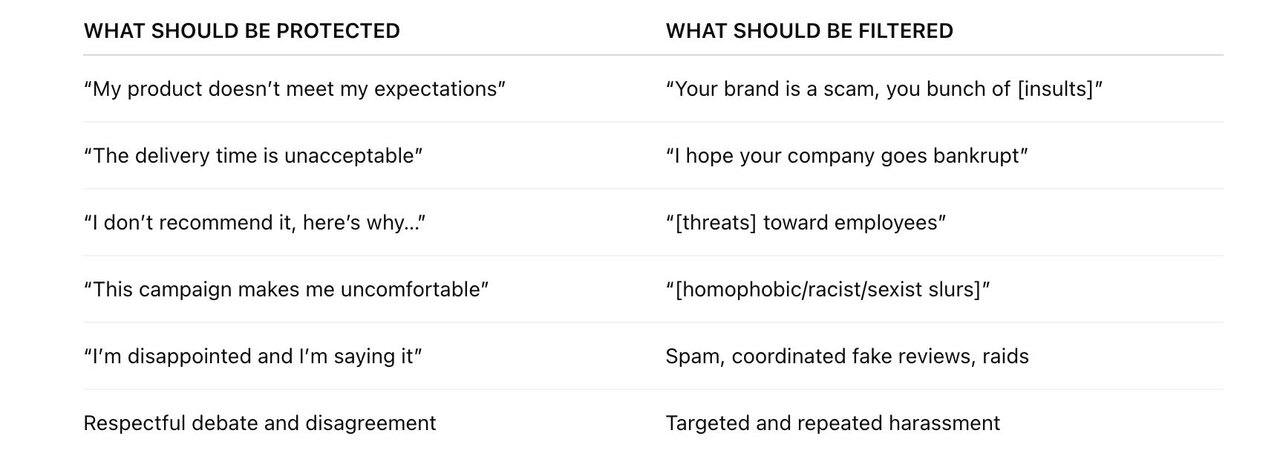

Lesson #1: Toxicity is the real enemy, not criticism

The fundamental distinction every brand needs to understand is the one between legitimate criticism and toxicity.

Legitimate criticism is valuable. It informs, challenges, and pushes you to improve. Toxicity destroys. It drowns out legitimate voices, traumatizes teams, and artificially amplifies crises.

Our contextual analysis technology is built precisely for this distinction. It’s not a binary keyword filter — it understands context, intent, and tone, allowing you to preserve dialogue while neutralizing hate. That’s why keyword-based moderation no longer works: it simply cannot make this distinction.
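The limitation of keyword matching can be shown with a deliberately naive filter. This sketch only illustrates the failure mode; it does not represent any contextual moderation system, and the blocklist, function name, and sample comments are invented for the example.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKLIST = {"trash", "scam"}


def keyword_filter(comment):
    """Return True if the comment contains a blocklisted word.
    This is the binary keyword approach, with no notion of context or intent."""
    words = re.findall(r"[a-z']+", comment.lower())
    return any(word in BLOCKLIST for word in words)


if __name__ == "__main__":
    samples = [
        # Legitimate product criticism that happens to use a listed word.
        "The packaging looked like trash and the zipper broke after a week.",
        # Personal harassment that uses no listed word at all.
        "Nobody would miss you if you disappeared.",
    ]
    for text in samples:
        print(keyword_filter(text), "|", text)
```

The filter removes the legitimate complaint and lets the harassment through, which is the opposite of the intended outcome. Telling the two apart requires looking at who or what the comment targets, not only at the words it contains.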

Lesson #2: Proactive protection prevents 90% of crises

Every example in this article shares a common pattern: the crisis could have been significantly mitigated — or even avoided — with proactive protection of conversation spaces.

The virtuous cycle of proactive protection:

- Toxic comments are filtered in real time → the space remains healthy

- Constructive voices dominate → the atmosphere encourages positive exchanges

- Legitimate criticism remains visible → the brand can respond calmly

- Prospects see a healthy conversation space → trust increases

- Trolls and raiders get discouraged → attacks lose effectiveness

This virtuous cycle doesn’t require massive investment. It requires a decision: protect your spaces before they are attacked, not after.

Lesson #3: Your comment sections deserve as much attention as your content

Brands invest thousands in content creation: art direction, copywriting, video production, distribution strategy. Then they publish this content into completely unprotected comment spaces, where anyone can destroy weeks of work in minutes.

It’s like investing in a beautiful storefront and leaving the door open to vandals.

In 2026, content and conversation spaces are inseparable. A brilliant post with toxic comments is a failure. A simple post with positive, engaged conversations is a success.

Invest in protecting your conversation spaces with the same rigor as your content creation. The impact of toxic comments on your brand image is measurable — and significant.

Lesson #4: Transparency and protection are not contradictory

A common misconception: “If we moderate, we’ll be accused of censorship.” This confusion comes from mixing up moderation and censorship.

- Censorship: Removing negative reviews, silencing criticism, blocking dissent

- Protection: Filtering harassment, blocking insults, neutralizing coordinated attacks, preventing malicious misinformation — while fully preserving criticism, disagreement, and debate

The healthiest and most active online communities are the best-moderated ones: Reddit with strict subreddit rules, Wikipedia with rigorous contribution standards, professional forums with clear guidelines.

Moderation is a sign of respect toward your community. It says: “This space matters, and we protect it so everyone can speak freely without fear of harassment.”

Lesson #5: Every bad buzz avoided is an invisible win

The most important lesson is also the hardest to showcase: the crises you prevent never make headlines. No one will know that your inclusive campaign could have turned into a controversy. No one will know that Tuesday’s coordinated raid was neutralized in 30 seconds. No one will know that the 200 hateful comments filtered this month could have driven away 500 prospects.

That’s the nature of prevention: its success is invisible. But it is real, measurable, and strategically critical.

Our dashboard helps make these invisible wins visible: volume of toxicity filtered, attacks neutralized, conversations protected. Because understanding what you’ve prevented is just as important as managing what you couldn’t avoid.

Conclusion

The examples analyzed in this article all point to one shared truth: the most dangerous bad buzz isn’t the one caused by a mistake from your brand — it’s the one fueled by the toxicity you allow to grow in your conversation spaces.

Campaigns derailed by hateful comments, communities emptied by rising toxicity, product launches sabotaged by coordinated attacks, employees harassed in full view of their employer — none of these situations were inevitable. Each could have been significantly mitigated through proactive protection of conversation spaces.

The fundamental takeaway is simple: protecting your conversation spaces is not censorship — it’s a condition for dialogue to exist at all. When toxicity takes over, there is no more conversation. No more constructive feedback. No more community. Only noise and hate.

At Bodyguard.ai, we believe every conversation space deserves protection. Our contextual analysis enables that protection with precision: criticism flows, debates thrive, disagreements are expressed. What gets filtered is hate, harassment, and manipulation — nothing else.

Brands that invest in this protection don’t silence their critics — they give them a space where they can actually be heard. And that’s exactly what builds a strong, sustainable online reputation.

To go further, explore our guide on how to prevent a bad buzz before it starts. If you’d like to see how our technology can protect your conversation spaces while preserving real dialogue, request a personalized demo.

This article is part of our online reputation series. You can also explore our guides on how to measure your online reputation.

© 2025 Bodyguard.ai — All rights reserved worldwide.